Model-Based Continuous Review

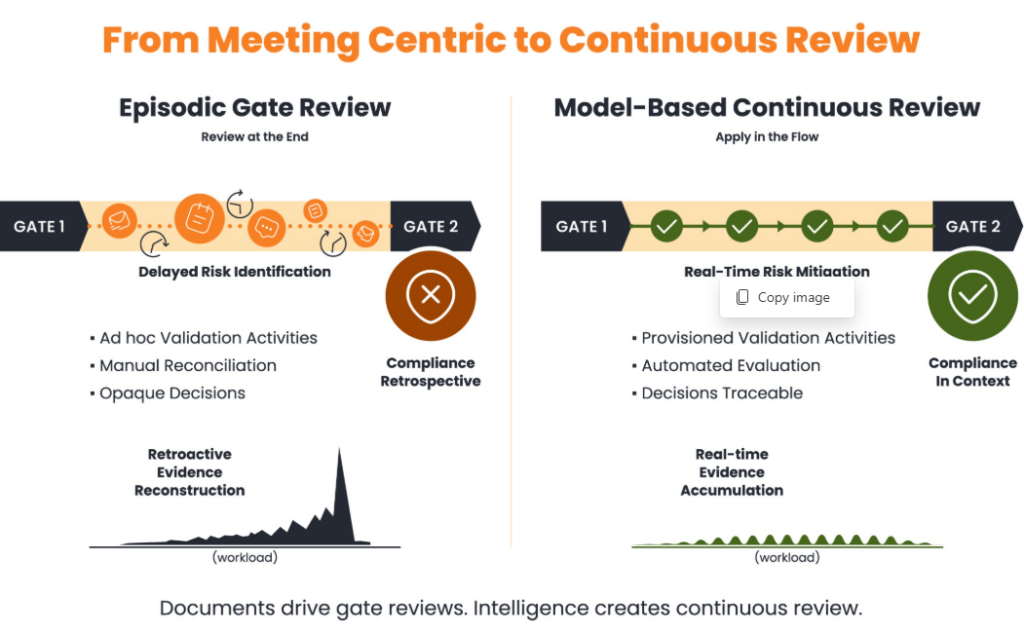

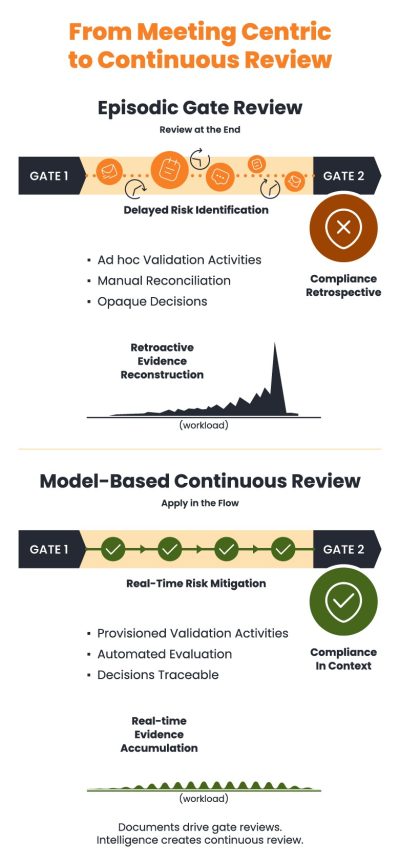

Prior to AurosIQ, design reviews at an automotive OEM were milestone meetings. The dimensions of each meeting (which topics, who must attend, which materials were to be reviewed, etc.) were set out in written instructions. Timing of events was the only automated element of the process. Teams would attach document-based evidence to the timing system to show progress. Lead engineers would spend most of their time preparing for the phase-gate review, collecting evidence and building report-style documentation.

With AurosIQ, the review starts at inception and is evaluated throughout the phase: the full team monitors and visualizes progress continuously. Evidence is captured in-process and accumulated rather than reconstructed at the end. Cognition models and intelligent parameter threads enable contextual provisioning and automated evaluation. Checklists, pass/fail statuses, auto-generated issues, and decision logs emerge organically during the phase. Phase-gate reviews driven by static data, documents, and milestones have been replaced by Model-Based Continuous Reviews.

Milestone reviews are among the most impactful and commonly executed processes in engineering and manufacturing workflows. Some reviews involve all stakeholders and focus on alignment and buy-in. Others are peer reviews that emphasize expert feedback and technical validation. They span every stage of the product lifecycle, from preliminary design to production readiness and beyond.

During the past decade, advances in collaborative software tools and asynchronous design reviews have contributed meaningful improvements to review effectiveness and productivity. Data is now active and can be shared, viewed, and edited in real time; tabulated, graphed, zoomed, panned, and visualized in different ways. Messaging is in-process and direct, and comment history is maintained in context. These are all valuable additions.

But despite the progress in data handling and visualization, one thing has remained constant. Data, documents, timelines, and milestones are still the dominant drivers, and they cause all reviews to face the same failure modes.

Much of this is attributable to the same root causes. The underlying content is static text and data: the what, the who, and the when, without the how and the why. The format is episodic, tied to specific product and process milestones. And the review process is managed rather than executed.

The Solution

Model-Based Cognition changes that. The content space is vastly enriched with cognition models that encode logic, constraints, and parameters and are provisioned dynamically based on context. This ensures that reviews are continuously executed against curated, precise content that is relevant to the specific project and the specific moment in time. Further, orchestration models in AurosIQ execute sequences of phases with continuous reviews. Within each phase, models automatically pull data from previous phases and from other specialized tools (CAD, PLM, CAE, bench testing, customer-built IAPs) and evaluate it in context. In other words, continuous review executes against live, connected logic.
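As a rough illustration only, the idea of contextual provisioning followed by automated evaluation can be sketched in a few lines of Python. This is not AurosIQ's API or data model; the `Check` class, the `provision`, `evaluate`, and `review` functions, the context tags, and the parameter names are all hypothetical and chosen just to make the concept concrete.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Set


@dataclass
class Check:
    """A hypothetical stand-in for a model: a named rule plus the contexts it applies to."""
    name: str
    applies_to: Set[str]                      # context tags that provision this check
    rule: Callable[[Dict[str, float]], bool]  # logic evaluated against live data


def provision(checks: List[Check], context: Set[str]) -> List[Check]:
    """Select only the checks relevant to the current project context."""
    return [c for c in checks if c.applies_to & context]


def evaluate(checks: List[Check], data: Dict[str, float]) -> Dict[str, str]:
    """Run each provisioned check against the data and record pass/fail."""
    return {c.name: ("pass" if c.rule(data) else "fail") for c in checks}


# Illustrative rules only; the limits and names are invented for this sketch.
CHECKS = [
    Check("bracket_mass_limit", {"chassis"}, lambda d: d["bracket_mass_kg"] <= 1.2),
    Check("weld_count_min", {"chassis", "body"}, lambda d: d["weld_count"] >= 8),
    Check("cell_temp_limit", {"powertrain"}, lambda d: d["cell_temp_c"] <= 60),
]


def review(context: Set[str], data: Dict[str, float]) -> Dict[str, str]:
    """One review pass: provision by context, then evaluate."""
    return evaluate(provision(CHECKS, context), data)


result = review({"chassis"}, {"bracket_mass_kg": 1.0, "weld_count": 6})
print(result)
```

In this sketch the powertrain check is never provisioned for a chassis project, so the review only asks for the data it actually needs, and `review` can be re-run whenever the data changes rather than once at a gate.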

Intelligent Parameter Threading ensures that parameters are reused across phases, sub-gates, reviews, and validation models – forming dynamic contextual links between otherwise discrete activities. This threading creates a continuous, evaluable logic layer that spans the entire process. A change in a requirement, test result, design attribute, or risk condition automatically propagates through every dependent model, eliminating the need for manual reconciliation. Instead of each phase operating as an isolated milestone with its own reconstructed evidence, the entire lifecycle becomes one connected cognitive structure. The process is no longer a sequence of managed gates; it is a continuously executed system of shared, parameterized logic.
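The propagation behavior described above can be pictured as a dependency map from shared parameters to the models that use them. The following is a minimal sketch under that assumption, not a description of how AurosIQ implements threading; the `ParameterThread` class, its methods, and the load parameters are hypothetical.

```python
from collections import defaultdict
from typing import Callable, Dict, List, Tuple

Rule = Callable[[Dict[str, float]], bool]


class ParameterThread:
    """Hypothetical shared parameter store that re-evaluates dependent models on change."""

    def __init__(self) -> None:
        self.values: Dict[str, float] = {}
        self.models: Dict[str, Tuple[List[str], Rule]] = {}
        self.dependents: Dict[str, List[str]] = defaultdict(list)
        self.status: Dict[str, str] = {}

    def register(self, name: str, inputs: List[str], rule: Rule) -> None:
        """Attach a model to every parameter it reads."""
        self.models[name] = (inputs, rule)
        for param in inputs:
            self.dependents[param].append(name)

    def set(self, param: str, value: float) -> List[str]:
        """Update one parameter and re-evaluate every model that depends on it."""
        self.values[param] = value
        reevaluated = []
        for name in self.dependents[param]:
            inputs, rule = self.models[name]
            if all(p in self.values for p in inputs):  # skip until all inputs exist
                self.status[name] = "pass" if rule(self.values) else "fail"
                reevaluated.append(name)
        return reevaluated


thread = ParameterThread()
# Two models in different phases share the same requirement parameter.
thread.register("design_review", ["max_load_n", "rated_load_n"],
                lambda v: v["rated_load_n"] >= v["max_load_n"])
thread.register("validation_test", ["max_load_n", "tested_load_n"],
                lambda v: v["tested_load_n"] >= 1.5 * v["max_load_n"])

thread.set("rated_load_n", 1000.0)
thread.set("tested_load_n", 1400.0)
thread.set("max_load_n", 900.0)             # both models evaluate and pass
changed = thread.set("max_load_n", 1000.0)  # the requirement changes
print(changed, thread.status)
```

Raising the shared requirement re-evaluates both models in one step: the design review still passes, while the validation test now fails, with no manual reconciliation between the two phases.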

The Benefits

All milestone reviews seek the same benefits – conformance with requirements & specifications, effective reuse of IP, compliance with standards and quality metrics, risk mitigation, cost reduction, and process improvement. Model-Based Continuous Review makes these goals achievable.

And all these gains are made while spending far less time on the review process itself. In the words of a Technical Lead at GM, speaking at the AurosIQ user conference in October 2024, “Peer reviews that took up to 30 hours are now taking maybe, a maximum of 4 hours. Typically, about 2 hours.”
